I recently bought a new laptop, something that I do every year or so, and the recent release of Windows 7 gave me a good excuse to do so. But this new purchase also gave me an opportunity to do something that I’ve been thinking about for a while: set up my machine in a way that makes my development environment easier to maintain.

Here’s the problem. Over time, in some cases in as little as three or four months, my computer becomes noticeably slower than it was when first configured. Eventually it gets so bad that I need to reinstall my operating system and all of my software and tools. For me, this can take days.

Part of my problem is that I need quite a few different versions of a number of different programs. For example, I currently support applications written in Delphi 7, Delphi 2007 (both Win32 and Delphi for .NET), Delphi 2009, Delphi 2010, and Visual Studio 2008 (including Delphi Prism). (I am also asked to teach classes in other versions of Delphi, such as Delphi 2006, or even Delphi 5.)

In addition, I use a variety of database servers, including Advantage Database Server and SQL Server, and these must be installed as well. And then there are the various tool sets, such as third-party component sets for the various applications I support, source code control, utilities, and the list goes on and on.

Actually, it’s much worse than it sounds. Take Delphi 2007, for instance. Once you install Delphi 2007 and its help files, and register it as a legitimate copy, you must also download and install the various patches. I estimate that the total time, from start to finish, to get Delphi 2007 installed is on the order of 5 hours.

And don’t get me started on Visual Studio 2008. In addition to the core development environment, there are several service packs, as well as updates such as Silverlight 3, all of which need to be downloaded and installed. All told, VS 2008 must have taken 8 hours.

Believe me, I’ve gotten pretty good at configuring my system, but this is a waste of time. Why can’t I just install it all once and be done with it?

I’ve tried to solve this problem in the past. One of my approaches was to carefully install everything I needed, and then make a good, clean image using something like Acronis True Image or Norton Ghost. This works to a degree, but it isn’t perfect. After restoring from an image, there is still a lot to do to get a system back into shape.

The fact is that the “clean” image you create early on isn’t perfect. It doesn’t have all the nifty tools you’ve picked up in the months since you created it. Nor does it have the latest third-party components that you have added to some of your more recent development projects.

Incremental images are not the answer, either. Once you start using your system, things get progressively worse, performance-wise. As a result, while that pristine, original image that you created, once restored, will perform very nicely, those incremental images you’ve been making along the way each contain some of the “gunk” that has been eating away at your performance. Restore from one of those and you’re starting with a non-optimal setup (and you will still need a day or so to get things really back to where you need them).

A Possible Solution

Over the past year I have been playing with an idea that is not all that original. Most of us use products like VMWare Workstation or Virtual PC to create virtual installations of guest operating systems. Most often we use these guest OSes to install beta software, so that it can run in a clean environment without our having to worry about it doing something ugly to our primary OS (the host operating system). Once testing is done, we simply delete the guest operating system and go on our way.

Well, sometime last year I bought a copy of Windows XP Pro and installed it as a guest OS under VMWare. I then installed Delphi 2007, as well as the various support tools that I use. I used this guest to compare how Delphi (and then other tools) worked under XP versus Vista (which I was using as my host OS).

This was all very educational, until at one point something weird happened to my Delphi 2007 installation on my host (personally, I blame the Internet Explorer 8 installation, but I’ll leave that speculation for another article). At the time I was on the road, working for 10 days at a client’s site, and I didn’t have my RAD Studio 2007 installation disks with me.

Fortunately, I was able to load the guest OS, and continue developing without missing a beat. I simply retrieved a clean copy of the source code from the version control system, and off I went.

A colleague of mine and I talked at length about what had happened, and we came to the same conclusion. Maybe I should isolate my development environment from the host operating system as a matter of practice. Furthermore, maybe I should isolate each of my different development environments from each other. Maybe, I thought, this would reduce (I wanted to say eliminate, but that would be blatant hubris) the “gunk,” and with it the opportunity for incremental decay of performance.

The Virtual Road is Paved With Good Intentions

Here is what I did. I bought a fast computer with loads of memory. This machine runs an Intel Core 2 Quad Q9000 CPU at 2 GHz. (Importantly, this chip supports hardware virtualization.) The machine also has 8 GB of DDR3 RAM. Plenty of memory, plenty of cores: I was good to go.

From here, I installed onto my host operating system, Windows 7 64-bit Home Premium, only the software that an ordinary computer user needs, and nothing else. I’m talking about basic stuff, like a word processor, an email client, a browser, antivirus, backup/restore software, a Twitter client, iTunes, and, most importantly, virtualization software, which in this case was VMWare Workstation 7.0. Once all of this was installed and any available updates applied, I made a nice clean image of this base.

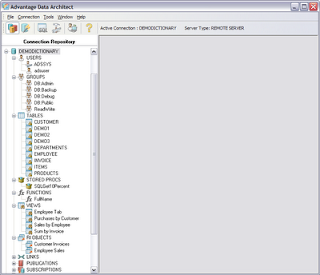

Next, I went to work on the guest operating systems, and I was very systematic about this. First, I created a guest OS with the absolute basics: Windows 7 64-bit Ultimate and antivirus. After installing all updates, I made a full clone of this guest OS. Onto the clone I installed the basic software I need in all of my development environments, including version control software, various utilities (like SysInternals Suite), Advantage Database Server, and SQL Server (hey, I’m a database guy; no matter what I’m doing, I’m going to need a database). Let’s refer to this virtual machine as “Database Base.”

Now I’m ready for the big time. I cloned Database Base, and installed onto this clone a copy of Delphi 2010. I created a second clone of Database Base and installed Visual Studio 2008 (that took an entire day). In all, I’ve got about seven of these development environments so far.
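The layering described above amounts to a small tree of clones. The Python sketch below models it purely for illustration: the `VM` class and the VM names are hypothetical, and software is tracked as a simple list of names. Because a clone is a copy taken at one moment, it also shows in miniature why a tool added to Database Base later does not show up in the environments already cloned from it.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class VM:
    """Hypothetical model of one virtual machine in the clone hierarchy."""

    name: str
    inherited: tuple[str, ...] = ()  # contents copied from the parent at clone time
    installed: list[str] = field(default_factory=list)  # added on this VM afterward

    def software(self) -> list[str]:
        """Everything present on this VM."""
        return list(self.inherited) + self.installed

    def clone(self, name: str) -> VM:
        # A clone is a copy of this VM's contents *now*; later
        # installs on this VM will not reach the clone.
        return VM(name, inherited=tuple(self.software()))


base = VM("Windows 7 Base", installed=["Windows 7 64-bit Ultimate", "antivirus"])

db_base = base.clone("Database Base")
db_base.installed += ["version control", "SysInternals Suite",
                      "Advantage Database Server", "SQL Server"]

delphi_vm = db_base.clone("Delphi 2010 VM")
delphi_vm.installed.append("Delphi 2010")

vs_vm = db_base.clone("VS 2008 VM")
vs_vm.installed.append("Visual Studio 2008")

# A utility discovered later and added only to the base VM...
db_base.installed.append("new merge tool")

for vm in (db_base, delphi_vm, vs_vm):
    print(f"{vm.name}: {', '.join(vm.software())}")
# ...appears only under Database Base, not in the existing clones.
```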

I also have a nice, 500 GB, portable, external eSATA drive (this laptop supports eSATA, which is about three times faster than USB 2.0). I backed up the image of my host OS, as well as all of the nice new guest OSes, to this drive.

Despite the limitations, which I’ll share with you in a moment, this is a pretty nice setup. Since I don’t actually develop in the host, and have only limited software installed on it, I gain two benefits. First, the host is small: if I had to install it from scratch, it would take hours, not days. And since I have a nice, clean image of the host, I can actually restore it in a matter of minutes, if necessary.

The second benefit is that there is little going on in the host. Sure, I end up installing necessary evils such as GoToMeeting, Adobe AIR, and other stuff like that, but it’s limited, since I am not developing in the host. And so far (it’s been a couple of months now), the host is showing few signs of slowing down.

And it’s working out fairly well with the virtual machines, so far. Performance is good. I’m allocating 3 GB of RAM to each virtual machine, which gives them some room to stretch while still permitting me to run two of them simultaneously without choking the host OS (though, frankly, things do get slow when the host has only 2 GB to play with, so I try not to run two guests at a time).
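The memory budget here is simple subtraction, and the short Python sketch below just restates the numbers from the text (8 GB total, 3 GB per guest). It treats the allocations as exact and ignores the virtualization software’s own per-VM overhead, so the real headroom for the host is somewhat smaller than these figures.

```python
TOTAL_RAM_GB = 8  # physical memory in the laptop described above
PER_GUEST_GB = 3  # RAM allocated to each virtual machine


def host_ram_left(guests_running: int) -> int:
    """RAM (GB) left for the host OS with the given number of guests running."""
    left = TOTAL_RAM_GB - guests_running * PER_GUEST_GB
    if left < 0:
        raise ValueError("guests would be allocated more RAM than the machine has")
    return left


for n in range(3):
    print(f"{n} guest(s) running: {host_ram_left(n)} GB left for the host")
```

With two guests running, the host is down to 2 GB, which matches the slowdown described above; a third 3 GB guest would not fit at all.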

And here’s the great thing. Since I’ve got the individual VMs backed up on the eSATA drive, I can restore them as well. In fact, if my idea works as planned, I should be able to migrate these VMs to my next machine, meaning that I may not have to install RAD Studio 2007 ever again. (I wish it were true, but I know in my heart that it is a lie. I know I’ll have to install RAD Studio 2007 again, just not as many times as I would have had I not taken this particular route.)

There are two additional, and compelling, advantages that I now have. First, if something awful happens to one of my development environments, I’ve got a quick solution. For example, if, when testing a new routine that removes an old key from the Windows registry I accidentally delete every ProgID, no worries. I simply copy the backed up guest VM file from my eSATA drive (which, of course, I have backed up to another storage drive as well), and I’m cooking with gas (this means that I’m back to work quickly).

The second advantage is this. When I get to the point of shipping a product (or hit a major milestone, or whatever), I can make a backup of the VM that I used, and I’ll have that exact environment forever. If I ever need to return to the precise installation of service packs, updates, component sets, and the like, that was used to create that special build, I’ve got it, right there, in that virtual machine that I’ve saved (and backed up, of course).
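Both the quick restore and the milestone archive come down to copying folders, since VMware Workstation keeps each virtual machine in a directory of files (the .vmx configuration file, the .vmdk virtual disks, and so on). The Python sketch below illustrates the idea under a few assumptions: the VM is powered off before it is copied, and the function names, paths, and labels are hypothetical placeholders rather than part of any VMware tool.

```python
import shutil
import tempfile
from datetime import date
from pathlib import Path


def archive_vm(vm_dir: Path, archive_root: Path, label: str) -> Path:
    """Copy a powered-off VM's folder to a dated, labeled archive folder."""
    target = archive_root / f"{vm_dir.name}-{label}-{date.today():%Y%m%d}"
    shutil.copytree(vm_dir, target)  # raises FileExistsError if target exists
    return target


def restore_vm(backup_dir: Path, vm_dir: Path) -> None:
    """Replace a (damaged) VM folder with a backed-up copy."""
    if vm_dir.exists():
        shutil.rmtree(vm_dir)
    shutil.copytree(backup_dir, vm_dir)


# Demo with throwaway folders standing in for the real VM and backup drive.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    vm = root / "Delphi2010VM"
    vm.mkdir()
    (vm / "Delphi2010VM.vmx").write_text("known-good configuration")

    saved = archive_vm(vm, root / "archive", "release-1.0")
    (vm / "Delphi2010VM.vmx").write_text("damaged")  # simulate an accident
    restore_vm(saved, vm)
    restored = (vm / "Delphi2010VM.vmx").read_text()

print(saved.name)
print(restored)  # known-good configuration
```

Putting the date and a label in the archive folder name keeps several milestone copies of the same environment side by side without overwriting one another.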

But It’s Not Perfect

I wish I could say that I’m completely satisfied with this solution, but I cannot. There are problems, and some of them are not trivial, though they are not horrific, either. In short, while there are benefits to this approach, I’ve already run into some serious limitations, even though I’m only a few months into this experiment.

The first issue is, honestly, a pretty minor one: it takes a little bit longer to get up and running, as far as development goes. In short, I have to wait for two OSes to load (the host and a guest) before I can get to work. Fortunately, these OSes, being Windows 7, load quickly, so it’s only a minor inconvenience.

The second issue is more complicated: software updates. You know, those annoying messages you get from our fine friends at Microsoft informing you that updates are being installed. (Actually, I don’t get those, because I refuse to let Microsoft decide when to install updates. I get to choose when.)

Well, each of the individual VMs has this problem, which means that right now I don’t install one update, I install eight (host plus seven guests). And Java and Adobe, and every other bloke on the block who wants to make sure that their bugs don’t destroy my system(s), want to install updates as well. You get the picture, and it isn’t pretty.

OK, if you only work in one development environment, say Visual Studio, this will not be an issue. Most of us, though, must support a variety of environments. So if you take the road I did, creating a separate guest OS for each, you're going to have to face this issue (which may mean ignoring updates altogether, with the exception of major service packs).

There is another, related issue. What if I find a great new tool (for example, the best merge tool that you’ve ever seen, one that flawlessly merges two different versions of the same source file)? Or what if I realize, after creating all of my swell VMs, that I failed to install one of my more useful utilities? Well, at present, I have to install these things separately in each VM. That is a time-consuming task if done all at once, or an annoyance if done piecemeal each time I discover the missing utility in a particular VM.

I was hoping that a feature of VMWare Workstation called linked clones was the answer (it’s not). A linked clone is a clone that shares the virtual disks of an existing VM rather than copying them. When I first started looking into linked clones, I thought my problems were solved. But after reading more closely, I found that the VMWare Workstation help makes it clear that a linked clone is associated with a particular snapshot of the parent VM, and that changes made to the parent after that snapshot do not appear in the clone.

I was really hoping that I could create a linked clone to a base VM, like Database Base, and then perform maintenance only to Database Base. For example, if a Windows update is released, I was hoping I could update only Database Base, and all the linked clones created from it would automatically have the updates. That’s not how it works. Even with linked clones, the individual clones need Windows updates (Oh, the humanity!).
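What the help file describes is essentially copy-on-write against a frozen snapshot. The toy Python model below is only an analogy (a real linked clone tracks changes to virtual disk data at a much lower level, and the class names and "patch level" labels are invented for illustration), but it shows why patching the parent after the snapshot never reaches the clone, and why the clone only has to store what it changed, which is also why a linked clone can be much smaller than a full clone.

```python
from __future__ import annotations

from types import MappingProxyType


class ParentVM:
    """Toy stand-in for a VM's disk: named items mapped to their contents."""

    def __init__(self, files: dict[str, str]) -> None:
        self.files = dict(files)

    def take_snapshot(self) -> MappingProxyType:
        # A snapshot freezes the disk state as of this moment
        # (a read-only view over a private copy).
        return MappingProxyType(dict(self.files))


class LinkedClone:
    """Reads fall through to the frozen snapshot; writes go to a private delta."""

    def __init__(self, snapshot: MappingProxyType) -> None:
        self.snapshot = snapshot
        self.delta: dict[str, str] = {}

    def read(self, name: str) -> str | None:
        if name in self.delta:
            return self.delta[name]
        return self.snapshot.get(name)

    def write(self, name: str, contents: str) -> None:
        self.delta[name] = contents  # copy-on-write: the snapshot is never modified


db_base = ParentVM({"windows": "patch level A", "SQL Server": "installed"})
delphi_clone = LinkedClone(db_base.take_snapshot())
delphi_clone.write("Delphi 2010", "installed")

# Patching the parent after the snapshot does not reach the clone.
db_base.files["windows"] = "patch level B"

print(delphi_clone.read("windows"))  # patch level A
print(len(delphi_clone.delta))       # 1 (only the clone's own changes are stored)
```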

While there is a slight performance decrement when using linked clones (not really an issue; we're not trying to run games on these systems), there is a benefit. Specifically, the individual linked clones, while requiring that you keep around a copy of the original VM that you cloned, take up much less disk space than full clones. For example, one full clone I have takes up 19 GB, while a comparable linked clone consumes 6.5 GB of disk space. This makes linked clones much more convenient to back up and restore.
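Scaled up to a full set of environments, the difference adds up. The Python arithmetic below assumes, purely for illustration, that all seven environments are the size of the two examples given here, and that the parent VM the linked clones depend on is about the size of a full clone; the text gives neither figure.

```python
FULL_CLONE_GB = 19.0   # one full clone, from the text
LINKED_CLONE_GB = 6.5  # a comparable linked clone, from the text


def disk_needed_gb(environments: int, linked: bool, parent_gb: float) -> float:
    """Rough disk space for a set of development VMs.

    Linked clones depend on their parent VM, so its size is counted once.
    parent_gb is an assumed input; the text does not give it.
    """
    if linked:
        return parent_gb + environments * LINKED_CLONE_GB
    return environments * FULL_CLONE_GB


ASSUMED_PARENT_GB = 19.0  # hypothetical: parent about the size of a full clone
for linked in (False, True):
    kind = "linked" if linked else "full"
    print(f"7 {kind} clones: {disk_needed_gb(7, linked, ASSUMED_PARENT_GB):.1f} GB")
```

Under those assumptions, seven linked clones plus their parent need roughly half the space of seven full clones (64.5 GB versus 133 GB).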

What’s The Solution?

As I said, this approach has some serious benefits, but it doesn’t solve all of the problems, either. Is there a perfect solution? I don't know.

Some developers I've talked to have used a system like this, and report that they are very pleased. In those cases, however, they had only one guest OS to maintain. And, either they only needed one development tool, or they installed all of their development environments on the single guest OS. While that might work, these guest OSes tend to get very large, and increase the likelihood that one tool (Visual Studio, for example) may introduce issues with another (say Eclipse).

Other developers I know accept the predictable cycle of reinstalling the OS and all tools. Of these, one of the more compelling approaches involves not only installing all tools and development environments, but also copying the contents of all installation CDs and DVDs to a directory or partition on the system. In addition, each time they install a service pack, they first download it to a folder alongside the installation disk images.

So long as you have a good image of your base operating system and a backup of your installation images, and keep a handy record of your serial numbers, registration keys, and the like, reinstallation goes much faster. It still takes time (days?), but much less than when installing from disks.
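One way to keep such an archive usable is to lay it out so that the install order can be read straight off the folders. The Python sketch below assumes a hypothetical layout (one folder per product, with `disc` and `updates` subfolders, and update files named so that alphabetical order matches the order they must be applied); the folder and file names are placeholders, not a convention taken from the developers described above.

```python
from __future__ import annotations

import tempfile
from pathlib import Path


def install_plan(archive: Path) -> list[str]:
    """List installers in the order to run them, product by product.

    Assumed (hypothetical) layout:
        archive/<product>/disc/     copied installation disc images
        archive/<product>/updates/  service packs and patches, named so that
                                    alphabetical order is install order
    """
    plan: list[str] = []
    for product in sorted(p for p in archive.iterdir() if p.is_dir()):
        for stage in ("disc", "updates"):  # base install first, then updates
            folder = product / stage
            if folder.is_dir():
                plan += [f"{product.name}: {f.name}" for f in sorted(folder.iterdir())]
    return plan


# Demo with placeholder file names in a throwaway folder.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    for rel in ("Delphi2007/disc/rad-studio-2007.iso",
                "Delphi2007/updates/02-second-update.exe",
                "Delphi2007/updates/01-first-update.exe",
                "ADS/disc/ads-server.iso"):
        path = root / rel
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text("")
    plan = install_plan(root)

for step in plan:
    print(step)
```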

So, while I'm pretty satisfied with my current setup, I'm still looking for something better. I’d like to hear what you think, and what you’ve done to address these issues.

Copyright © 2009 Cary Jensen. All Rights Reserved